This is how we do SEO for developer tools: A complete guide

The exact SEO process Hackmamba uses for devtools. Content inventory, keyword research, hub-and-spoke architecture, link building, and GEO monitoring in one guide.

The question we get asked the most now is: "Hackmamba, can you help us with GEO and AEO?"

Every devtool company wants to show up in ChatGPT, Perplexity, and Google AI Overviews. The answer is not a separate GEO or AEO strategy. It is SEO done right.

Google confirmed this officially on May 15, 2026:

From Google Search's perspective, optimizing for generative AI search is optimizing for the search experience, and thus still SEO.

In this guide, we cover exactly how we do SEO for developer tool companies at Hackmamba: the process, the tools, and what the work looks like at each stage.

GEO (Generative Engine Optimization) is the practice of getting your content cited by AI tools like ChatGPT and Perplexity. AEO (Answer Engine Optimization) is the practice of structuring content to be extracted as a direct answer in AI-generated responses.

As of April 2026, Google is the second most cited domain in LLMs at 21.92%, behind only YouTube (see image below). That means the content Google ranks is the content LLMs are pulling answers from.

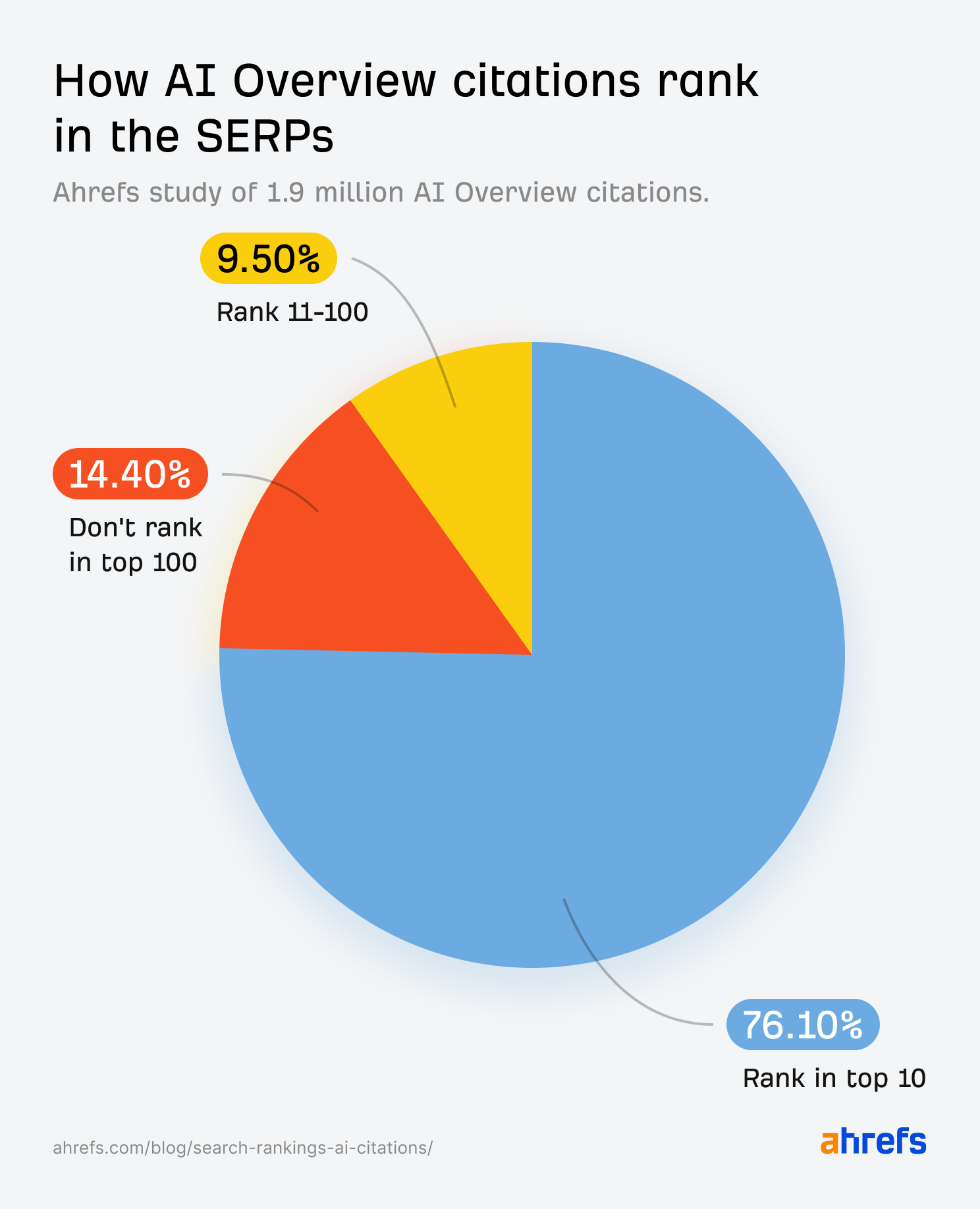

Ahrefs confirmed the same pattern for AEO: 76.1% of URLs cited in Google AI Overviews already rank in the top 10 of Google search results. The content showing up in AI answers did not get there through a separate strategy. It earned its place through SEO first.

For both GEO and AEO, SEO is the baseline. Everything else follows.

Also note that SEO is one component of a broader developer marketing program. What is developer marketing covers how SEO/AEO fits alongside technical content, DevX, DevRel, distribution, and GTM, so you can see where the SEO investment compounds with the rest of the program.

When a devtool company comes to us, the first question we ask is simple: do you have existing content or are you starting from zero? The answer determines where we begin.

Step 1 - content inventory

Most agencies start with keyword research. We start by understanding what already exists.

If a devtool company comes to us with existing content, the first thing we do is a full content inventory. Building a content strategy without knowing what you already have leads to duplicate articles, cannibalized keywords, and pages that have never seen a single click.

Step 2 - audience research

Most devtools content fails because it is written for "developers" as a category. That is too broad to be useful. A DevOps engineer at a Series B startup has completely different problems from a solo backend developer building a side project. Writing for both at the same time means you speak clearly to neither.

We build personas before we touch keywords or content. The persona defines who we are writing for, what they care about, and what is blocking them. Everything else follows from that.

The framework we use: Jobs to be done

A persona without a job is just a demographic profile. It tells you who the person is but not why they would ever care about your product.

Jobs to be done flips the question. Instead of asking "who is this developer," we ask "what job are they trying to get done, and what is getting in the way?" A DevOps engineer is not searching for "observability tool." They are trying to stop getting paged at 3am because something broke silently in production. That is the job. That is what the content needs to speak to.

The question we ask for every client: what job is the developer hiring this product to do, and where are they failing to get it done today?

From pain points to seed keywords

Each persona's frustration maps directly to a search query. That query becomes the seed keyword we take into research.

| Persona | Pain Point | Seed Keyword |
|---|---|---|
| DevOps Engineer | Alert fatigue and poor observability | "Kubernetes alert noise" |
| Solo Backend Developer | Docs assume too much prior knowledge | "[product] Node.js quickstart" |
| Engineering Lead | Getting buy-in for new tools | "devtools evaluation enterprise" |

Our private Apify workflow runs on these seed keywords, scraping Reddit, GitHub issues, and Stack Overflow for the exact language each persona uses. The output is a structured dataset of developer language that feeds directly into keyword research.

Step 3 - keyword research

We use Ahrefs. Every seed keyword from Step 2 goes in along with the developer language the Apify workflow surfaced. We expand each one, look at which related terms surface, and group everything into clusters by topic.

The output of this step is what we call the keyword universe. We then cross-check this keyword universe against the content inventory we built in Step 1.

This is how it works:

| Status | What it means | What we do |
|---|---|---|
| Content exists, not ranking | Google sees no value in it | Audit it. In most cases we rewrite it from scratch and redirect the old URL to the new one |
| Content exists, ranking below position 10 | Has potential, needs a push | Run it through Surfer SEO optimization (covered in Step 6) |
| Content exists, ranking in top 10 | Working | Protect it, build internal links to it, expand the cluster around it |
| No content exists | Gap in the plan | Prioritize it in the content calendar |
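The cross-check in the table boils down to a small triage rule. Here is a minimal Python sketch of it, assuming each inventory row records the target keyword, the page URL (or none, for a gap), and the page's current Google position (none when it does not rank). The `InventoryRow` type and its fields are hypothetical, not an Ahrefs export format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InventoryRow:
    """Hypothetical inventory entry: one target keyword and its page, if any."""
    keyword: str
    url: Optional[str]        # None when no page targets the keyword yet
    position: Optional[int]   # current Google position; None if not ranking


def triage(row: InventoryRow) -> str:
    """Map an inventory row to the action in the status table."""
    if row.url is None:
        return "prioritize in content calendar"
    if row.position is None:
        return "audit, rewrite, redirect old URL"
    if row.position > 10:
        return "optimize with Surfer"
    return "protect and build internal links"


rows = [
    InventoryRow("kubernetes alert noise", "/blog/k8s-alerts", 14),
    InventoryRow("opentelemetry getting started", "/blog/otel-intro", 4),
    InventoryRow("api error rate alerting", "/blog/old-post", None),
    InventoryRow("distributed tracing tools", None, None),
]
for row in rows:
    print(f"{row.keyword}: {triage(row)}")
```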

Why we start with long-tail keywords

Most developer tool companies have a low Domain Authority (DA) when they come to us. Going after high-volume, high-difficulty keywords from day one means you will not rank and you will not get cited.

Long-tail keywords are where devtool companies win early. "API rate limit backoff strategy Node.js" has lower volume than "API tools" but the developer searching for it knows exactly what they need. They are closer to a decision, they convert better, and because specificity narrows the competition, a site with modest authority can realistically rank for it.

The prioritization logic we use, by keyword difficulty (KD) and intent:

| Priority | Signal | Example |
|---|---|---|
| Start here | Low KD, clear buying intent | "[product] alternatives", "webhook retry logic Node.js" |
| Build towards | Medium KD, high volume, top of funnel (TOFU) | "reduce alert noise Kubernetes" |
| Earn over time | High KD, broad category terms | "best observability tools for microservices" |

High-KD broad terms come later, once spoke content and backlinks have built authority around the cluster.
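The prioritization table can be expressed as a bucketing rule. This is a sketch only: the KD cutoffs (below 20 is low, below 50 is medium) and the `buying_intent` flag are hypothetical placeholders, not thresholds from our process, and the KD and volume figures in the example are made up.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical KD cutoffs; tune them to the site's current authority.
LOW_KD = 20
HIGH_KD = 50
BUCKET_ORDER = {"start here": 0, "build towards": 1, "earn over time": 2}


@dataclass
class Keyword:
    term: str
    kd: int               # keyword difficulty, 0-100
    volume: int           # monthly searches
    buying_intent: bool   # e.g. "alternatives", "vs", product-specific how-tos


def bucket(kw: Keyword) -> str:
    """Assign a keyword to one of the three priority tiers."""
    if kw.kd < LOW_KD and kw.buying_intent:
        return "start here"
    if kw.kd < HIGH_KD:
        # Assumption: low-KD keywords without buying intent also land here.
        return "build towards"
    return "earn over time"


def plan(keywords: List[Keyword]) -> List[Keyword]:
    """Order by tier first, then by volume (highest first) within a tier."""
    return sorted(keywords, key=lambda kw: (BUCKET_ORDER[bucket(kw)], -kw.volume))


# Made-up KD and volume figures, for illustration only.
universe = [
    Keyword("best observability tools for microservices", 72, 2400, False),
    Keyword("webhook retry logic Node.js", 8, 150, True),
    Keyword("reduce alert noise Kubernetes", 30, 900, False),
]
for kw in plan(universe):
    print(bucket(kw), "|", kw.term)
```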

Step 4 - content architecture and strategy

We use the hub-and-spoke model for every client. It is an effective way to build topical authority systematically.

The hub page covers a broad topic. The spoke pages go deep on each subtopic inside that cluster. Every spoke links back to the hub. The hub links to every spoke. Related spokes link to each other.

What this looks like in practice

For an observability tool, the hub becomes “What is Observability and Why It Matters for Modern Engineering Teams”.

Spokes:

- How to Reduce Alert Noise in Kubernetes

- API Error Rate Alerting Setup

- Distributed Tracing Tools for Microservices

- OpenTelemetry Getting Started Guide

- Best Observability Tools for DevOps Teams

Google reads this as topical authority: not one page about observability, but an entire web of content covering every angle of it. When ChatGPT or Perplexity gets asked about observability tools, it pulls from multiple pages covering the same topic from the same domain. That is what gets you cited.

The internal linking rule

Every spoke links to the hub and to at least two related spokes. The hub links to every spoke. This is what signals to Google that these pages belong to the same topic territory.

For clients who have an existing content strategy, we run our internal linking audit before building any new cluster. It surfaces orphan pages, maps existing anchor text, and shows where link equity is going. Building new architecture on top of a broken link structure compounds the problem.
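The linking rule and the orphan-page check can both be run over a simple link graph. A minimal sketch, assuming you already have each page's outgoing internal links (for example from a site crawl export); the `check_cluster` and `find_orphans` helpers and the paths are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical crawl export: page path -> internal pages it links to.
LinkGraph = Dict[str, Set[str]]


def check_cluster(graph: LinkGraph, hub: str, spokes: List[str]) -> List[str]:
    """List violations of the hub-and-spoke linking rule."""
    problems = []
    spoke_set = set(spokes)
    for spoke in spokes:
        outgoing = graph.get(spoke, set())
        if spoke not in graph.get(hub, set()):
            problems.append(f"hub does not link to {spoke}")
        if hub not in outgoing:
            problems.append(f"{spoke} does not link to the hub")
        related = len(outgoing & (spoke_set - {spoke}))
        if related < 2:
            problems.append(f"{spoke} links to only {related} related spoke(s)")
    return problems


def find_orphans(graph: LinkGraph, homepage: str = "/") -> Set[str]:
    """Known pages that receive no internal links from any other page."""
    linked: Set[str] = set()
    for source, targets in graph.items():
        linked |= targets - {source}
    return set(graph) - linked - {homepage}


graph: LinkGraph = {
    "/": {"/observability"},
    "/observability": {"/k8s-alerts", "/tracing", "/otel"},
    "/k8s-alerts": {"/observability", "/tracing", "/otel"},
    "/tracing": {"/observability", "/k8s-alerts"},
    "/otel": {"/observability", "/k8s-alerts", "/tracing"},
    "/old-webinar": set(),
}
print(check_cluster(graph, "/observability", ["/k8s-alerts", "/tracing", "/otel"]))
print(find_orphans(graph))
```

In this made-up graph, "/tracing" breaks the two-related-spokes rule and "/old-webinar" is an orphan, which are the two kinds of issues the audit is meant to surface before a new cluster goes up.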

Step 5 - content types for devtools

Not every piece of content serves the same purpose. Before we write a single brief, we map each keyword from the universe to a content type. The keyword intent tells us the format. Getting the format wrong means the content will not rank even if the keyword is right.

Six content types work for devtool companies:

1. Comparison and alternatives pages

A developer searching for "[product] alternatives" or "[A] vs [B]" is already evaluating options. We prioritize these early in the content plan regardless of volume because the conversion rate is high and LLMs cite these pages heavily when recommending tools. Omniscient Digital's analysis of 23,000+ AI citations found that LLMs cite comparison and listicle content constantly because the format mirrors how people actually evaluate options: 40.86% of commercial queries cite this content type.

2. Educational guides and tutorials

The majority of the keyword universe for any devtools company is informational. A developer stuck on a specific problem searches for a solution. These pages capture long-tail keywords, build trust before the developer considers buying, and feed the top of the funnel consistently over time.

3. Feature and use case pages

These map directly to what the product does. "How to set up rate limit alerting with [product]." The developer already knows the product exists and wants to know if it solves their specific problem. We treat these as bottom of funnel pages and optimize them for conversion alongside rankings.

4. Data-driven content

Original research earns backlinks without outreach. A survey, a benchmark study, an analysis of usage patterns. Other writers cite it. LLMs cite it. Ahrefs said it best from their own experience:

Something that we've found effective at Ahrefs is original research. Our SEO Pricing page — one of our most cited pages - works because it's based on an original survey of 439 people. We're the primary source of that data.

We recommend at least one data-driven piece per quarter for clients who want to build domain authority faster.

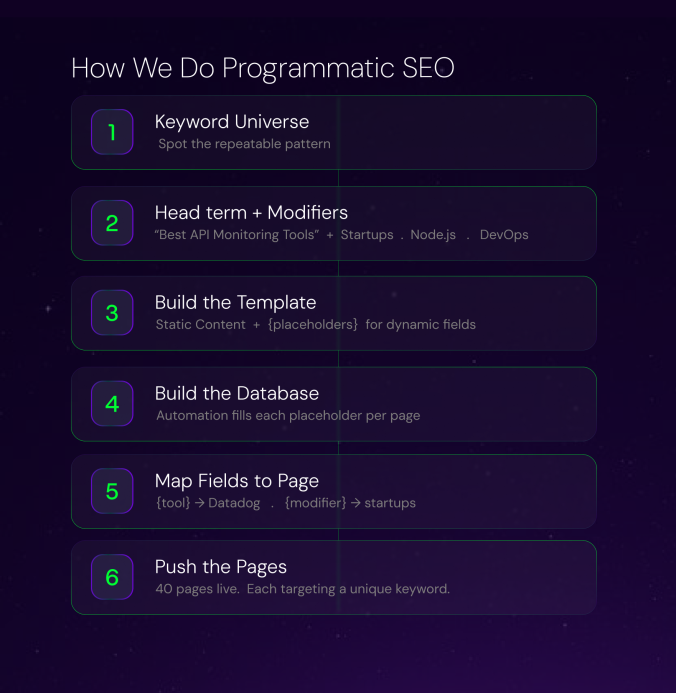

5. Programmatic pages

When the keyword universe has a repeatable pattern, such as "best [product category] for [use case]" across dozens of combinations, we cover it programmatically. Each page targets a specific long-tail keyword. This is how you scale coverage across an entire cluster without writing every page from scratch.
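A programmatic cluster starts from the cross product of the pattern's slots. This sketch assumes hypothetical category and use-case lists and a placeholder URL scheme; it shows how each combination becomes one page spec with its own long-tail keyword, title, and URL.

```python
import re
from itertools import product
from typing import Dict, List

# Hypothetical slot values for "best [product category] for [use case]".
CATEGORIES = ["observability tools", "log management tools"]
USE_CASES = ["kubernetes", "serverless", "startups"]
BASE_URL = "https://example.com/best"  # placeholder domain and path


def slugify(text: str) -> str:
    """Lowercase and collapse each run of non-alphanumerics into one hyphen."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_pages() -> List[Dict[str, str]]:
    """One page spec per category/use-case combination."""
    pages = []
    for category, use_case in product(CATEGORIES, USE_CASES):
        keyword = f"best {category} for {use_case}"
        pages.append({
            "keyword": keyword,
            "title": keyword[0].upper() + keyword[1:],
            "url": f"{BASE_URL}/{slugify(category + ' for ' + use_case)}",
        })
    return pages


for page in build_pages():
    print(page["url"])
```

In practice each spec would feed a page template, and the slot values would come from the keyword universe rather than a hardcoded list.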

6. Micro SEO tools

Free utilities developers actually use: a JSON formatter, a cron expression generator, a regex tester. We think of these as passive link magnets. Developers find them useful, share them, and link to them without any outreach needed. They also bring in developers who have never heard of the product and keep them on the domain longer than any blog post would.

We have covered how Postman and Algolia use these micro SEO tools in our developer marketing strategy guide.

Step 6 - content creation and SEO optimization

Once the keyword universe and content architecture are locked, we move to briefs and creation.

We create all content in Boki, our AI-native content marketing platform for assigning briefs, tracking content status, and managing distribution. Every brief is built around the target keyword, the persona, the pain point, and the content type mapped in the keyword universe. The writer knows exactly who they are writing for and what job the content needs to do.

After the content is written, every piece goes through Surfer SEO before it gets published. We check NLP terms, content score, and how the piece benchmarks against what is currently ranking. If the content score is not where it needs to be, it does not go live.

This is a non-negotiable step in our process. Surfer tells us whether the content is optimized enough to compete for the keyword it is targeting.

The order is fixed: brief in Boki, write, Surfer pass, publish. Every time.

Step 7 - backlink strategy

Backlinks are still one of the strongest ranking signals Google uses. For devtool companies with a low DA, the backlink strategy determines how fast the content plan compounds.

We split this into three plays.

Note: Every backlink and citation we acquire is genuine and contextually relevant to the topic it sits in. Google's May 2026 guide confirmed that inauthentic mentions get filtered out by spam systems. We do not build links that look manufactured.

Play 1 - high-intent keywords go on high-DA sites

"Best SERP APIs," "Top GraphQL tools," "[product] alternatives" - publishing these on a site with low authority is a waste. Google will not rank them and in some cases will deindex them. We publish this content as placements on high-DA sites. The placement ranks. The client gets the traffic and the backlink pointing to their money page.

Where we place:

- Dev.to, Hashnode, and Medium: developer-native, indexed fast, and trusted by Google

- Niche devtools directories and roundups already ranking for the exact terms

Play 2 - anchor text gives context

Every placement we build has deliberate anchor text mapped to a specific page. We decide this before the placement goes live.

The ratio we use across a client's backlink profile:

| Anchor Type | Ratio | Used for |
|---|---|---|
| Partial match | 60% | Comparison pages, alternatives pages |
| Branded | 25% | Homepage, product pages |
| Exact match | 15% | Highest priority money pages only |

Exact match anchors are reserved for the pages we most want to rank. Using them everywhere dilutes the signal and looks manipulative.
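Checking a backlink profile against that mix is simple arithmetic. A minimal sketch, assuming each backlink has already been labeled with an anchor type; the 5-point tolerance is a hypothetical choice, not part of the ratio above.

```python
from collections import Counter
from typing import Dict, List

# Target share of each anchor type across the backlink profile.
TARGET_MIX = {"partial": 0.60, "branded": 0.25, "exact": 0.15}
# Hypothetical tolerance: flag any type that drifts more than 5 points.
TOLERANCE = 0.05


def anchor_mix(anchor_types: List[str]) -> Dict[str, float]:
    """Share of each target anchor type; other labels still count in the total."""
    if not anchor_types:
        raise ValueError("no backlinks to analyze")
    counts = Counter(anchor_types)
    return {kind: counts[kind] / len(anchor_types) for kind in TARGET_MIX}


def drift_report(anchor_types: List[str]) -> Dict[str, float]:
    """Anchor types whose share is off target by more than TOLERANCE."""
    mix = anchor_mix(anchor_types)
    return {
        kind: round(mix[kind] - target, 3)
        for kind, target in TARGET_MIX.items()
        if abs(mix[kind] - target) > TOLERANCE
    }


# Hypothetical profile: 10 partial, 5 branded, 5 exact-match anchors.
profile = ["partial"] * 10 + ["branded"] * 5 + ["exact"] * 5
print(drift_report(profile))
```

In this example, exact-match anchors make up 25% of the profile against a 15% target, the kind of overuse the rule above warns against.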

Play 3 - paid citations from our network for LLM visibility

We have a network of relevant, authoritative sites we work with directly. For keywords that search engines and LLMs need to associate with a client's product, we get targeted citations placed on these sites.

Each placement mentions the product in the exact context we want it known for. When multiple independent sources describe a product the same way, LLMs pick that up and reinforce it in their outputs. This is how you influence what ChatGPT and Perplexity say about your product.

The GEO connection

Every placement does two jobs. It passes link equity to the target page for rankings. It also creates the multi-source corroboration that LLMs use to determine what to cite. The backlink strategy and the GEO strategy are the same strategy executed together.

Step 8 - technical SEO

We do not run a 25-point technical audit. We focus on what actually impacts crawlability, indexing, and structured data for devtools sites.

Sitemap XML

We segment the sitemap by content type. Blog posts, product pages, and programmatic pages each get their own sitemap. This gives Google a cleaner picture of what exists and makes indexing issues quick to spot, section by section.
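A segmented setup is usually a sitemap index that points to one child sitemap per content type. The sketch below builds both with Python's standard library, following the sitemaps.org 0.9 format; the domain, file names, and URLs are placeholders.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
BASE = "https://example.com"  # placeholder domain


def urlset(urls: List[str]) -> str:
    """A child sitemap listing one content type's URLs."""
    root = ET.Element("urlset", xmlns=NS)
    for url in urls:
        ET.SubElement(ET.SubElement(root, "url"), "loc").text = url
    return ET.tostring(root, encoding="unicode")


def sitemap_index(section_names: List[str]) -> str:
    """The index file that points crawlers at every child sitemap."""
    root = ET.Element("sitemapindex", xmlns=NS)
    for name in section_names:
        entry = ET.SubElement(root, "sitemap")
        ET.SubElement(entry, "loc").text = f"{BASE}/sitemap-{name}.xml"
    return ET.tostring(root, encoding="unicode")


# Hypothetical URLs grouped by content type.
sections: Dict[str, List[str]] = {
    "blog": [f"{BASE}/blog/reduce-alert-noise-kubernetes"],
    "product": [f"{BASE}/product/alerting"],
    "programmatic": [f"{BASE}/best/observability-tools-for-kubernetes"],
}
files = {f"sitemap-{name}.xml": urlset(urls) for name, urls in sections.items()}
files["sitemap-index.xml"] = sitemap_index(list(sections))
```

The index URL is also what a `Sitemap:` line in robots.txt would point to.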

Robots.txt

Staging environments, admin pages, and duplicate parameter URLs get blocked. We make sure Google is not burning crawl budget on pages that should never be crawled.
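Here is an illustrative robots.txt for that setup, checked with Python's built-in `urllib.robotparser`. The paths are hypothetical. Two caveats: the standard-library parser only does simple prefix matching, so it will not evaluate the `*` and `$` wildcards Google supports (the usual way to target parameter URLs), and a staging environment on its own subdomain needs its own robots.txt, because the file only applies to the host that serves it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for the main site; paths are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /staging/
Disallow: /search

Sitemap: https://example.com/sitemap-index.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse local lines; no network fetch

for path in ["/blog/reduce-alert-noise-kubernetes", "/admin/users", "/search?q=otel"]:
    allowed = parser.can_fetch("Googlebot", f"https://example.com{path}")
    print(f"{path}: {'crawlable' if allowed else 'blocked'}")
```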

Canonical tags

Programmatic pages create duplicate content risk. Every page gets a canonical tag pointing to the correct URL. This matters especially when content is accessible via multiple URL parameters.
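One common way to compute the canonical target is to strip parameters that do not change page content and normalize the rest. A minimal sketch using `urllib.parse`, assuming a hypothetical list of ignorable parameters and an HTTPS-only, no-trailing-slash URL convention; the right rules depend on how the site actually uses query strings.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that never change what the page shows.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref", "sort"}


def canonical_url(url: str) -> str:
    """Drop ignorable params, sort the rest, and normalize host and path."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS
    )
    # Assumed site conventions: HTTPS only, no trailing slash except the root.
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(kept), ""))


def canonical_tag(url: str) -> str:
    """HTML link element; escape() turns & into &amp; inside the attribute."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}" />'
```

Sorting the remaining parameters means that `?b=2&a=1` and `?a=1&b=2` collapse to the same canonical URL.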

Schema markup

Every content type gets its own schema:

- Article schema on blog posts and tutorials

- FAQPage schema on comparison and alternatives pages

- HowTo schema on step-by-step guides

- Organization and Product schema on the homepage and product pages

LLMs parse structured data. Getting this right directly increases citation probability in AI answers.
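Schema is usually shipped as JSON-LD inside a `<script type="application/ld+json">` tag. The sketch below builds Article and FAQPage objects using schema.org property names; the headline, author, dates, and Q&A text are placeholders. One caveat: in 2023 Google limited FAQ rich results to well-known government and health sites and dropped HowTo rich results, so treat these types as machine-readable structure rather than a guaranteed SERP feature.

```python
import json
from typing import List, Tuple


def article_schema(headline: str, author: str, published: str, modified: str) -> dict:
    """Article markup for blog posts and tutorials (dates in ISO 8601)."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "dateModified": modified,
    }


def faq_schema(pairs: List[Tuple[str, str]]) -> dict:
    """FAQPage markup: one Question/Answer pair per entry."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def ld_json_tag(schema: dict) -> str:
    # Escape "</" so the JSON cannot close the script tag early.
    body = json.dumps(schema, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


faq = faq_schema([
    ("How does [product] compare to [competitor]?",
     "Placeholder answer written for the comparison page."),
])
print(ld_json_tag(faq))
```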

Core Web Vitals

Most devtools sites run on React or Next.js. The three metrics that matter:

| Metric | Target | What it measures |
|---|---|---|
| Largest Contentful Paint | Under 2.5s | How fast the main content loads |
| Interaction to Next Paint | Under 200ms | How fast the page responds to interaction |
| Cumulative Layout Shift | Under 0.1 | Visual stability while the page loads |

The most common issues we fix: images not served in a modern format like WebP, no priority loading on above-the-fold images, client-side rendering delaying LCP, and heavy JavaScript on the main thread pushing INP above the threshold. For Next.js sites, switching to React Server Components and using next/image solves most of this.
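Google assesses each Core Web Vital at the 75th percentile of real-user page loads, and each metric has a "good" boundary and a "poor" boundary. The sketch below applies those published thresholds to a list of field samples; the nearest-rank percentile is one simple choice among several, and the sample values in any usage are up to you.

```python
import math
from typing import Dict, List, Tuple

# (good upper bound, poor lower bound) per metric, per Google's published
# thresholds. LCP and INP are in milliseconds; CLS is a unitless score.
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def p75(samples: List[float]) -> float:
    """75th percentile using the nearest-rank method."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def rate(metric: str, samples: List[float]) -> str:
    """Rate a metric from real-user samples at the 75th percentile."""
    good_max, poor_min = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good_max:
        return "good"
    if value <= poor_min:
        return "needs improvement"
    return "poor"
```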

Step 9 - GEO and AEO

Developers are increasingly discovering products through AI-powered search experiences. Whether they are using ChatGPT, Claude, Gemini, Perplexity, or AI features within traditional search engines, the goal remains the same: finding reliable answers to technical problems.

The good news is that most of the work required to earn visibility in AI search is already covered in the previous steps. High-quality technical content, strong documentation, topical authority, clear information architecture, and content that answers real developer questions all increase the likelihood of being surfaced in AI-generated responses.

What changes in this step is the focus. Instead of optimizing solely for rankings and clicks, teams also need to think about how their content is cited, summarized, and referenced by AI systems. The AI search optimization guide for technical content covers practical ways to improve visibility in AI-generated results, including content depth, documentation structure, visual assets, and decision-stage content.

Just as important is understanding how developers interact with AI-generated answers. The AI search optimization and developer behavior guide explores the trust signals, validation habits, and information needs that shape how developers evaluate information surfaced by AI tools.

How we monitor AI traffic

We use a GEO tool to track sessions coming from ChatGPT, Perplexity, Claude, Gemini, and Grok separately. This tells us which pages are getting cited, which prompts are triggering those citations, and how the product is being described by each model.
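Alongside a dedicated GEO tool, a rough per-assistant split can come from session referrers. A minimal sketch, assuming the referrer hostnames below. These hostnames are assumptions, not an official list: verify them against your own analytics, since hostnames change and many AI-originated visits arrive with no referrer at all, so this approach undercounts.

```python
from collections import Counter
from typing import Iterable, Optional
from urllib.parse import urlsplit

# Assumed referrer hosts per assistant; verify against your own analytics.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "grok.com": "Grok",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the assistant name for a referrer URL, or None if not an AI tool."""
    host = urlsplit(referrer).hostname or ""
    # Match the host itself or any subdomain (e.g. www.perplexity.ai).
    for domain, assistant in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return assistant
    return None


def sessions_by_assistant(referrers: Iterable[str]) -> Counter:
    """Count sessions per assistant, ignoring non-AI referrers."""
    return Counter(s for s in map(ai_source, referrers) if s is not None)
```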

Six things we do specifically for GEO and AEO

1. Answer-first structure

Every section opens with a direct 2 to 3 sentence answer. LLMs extract these blocks first when assembling a response. If the answer is buried three paragraphs deep, it does not get cited.

2. Citation surface coverage

LLMs need to see your product referenced across multiple independent sources before they cite it with confidence. The placements on Dev.to, Hashnode, niche directories, and our paid citation network from Step 7 do double duty here. Each placement is a corroboration signal. The more sources describe your product in the same context, the higher the citation frequency across ChatGPT, Perplexity, and Claude.

One thing Google's May 2026 guide flagged explicitly: inauthentic mentions do not work. Generic paid placements with no editorial relevance get filtered out by spam systems. Every placement needs to be contextually relevant to the topic it sits in and genuine in how it references the product. Citation building that looks manufactured gets ignored at best and penalized at worst.

3. Entity consistency

The product name, company name, and core use cases get referenced consistently across every page on the site and across every third-party placement. An About page, a GitHub README, and an API spec all pointing to the same Organization schema entry give AI engines confidence to merge those references and surface the brand as an authoritative node in generative answers.
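In schema terms, this usually means one Organization node with a stable `@id` that other pages reference, plus `sameAs` links to the brand's official profiles. The sketch below uses a placeholder company name, domain, and profile URLs; `@id`, `sameAs`, and Product's `brand` property are standard schema.org/JSON-LD, but every value shown is hypothetical.

```python
import json

SITE = "https://example.com"          # placeholder domain
ORG_ID = f"{SITE}/#organization"      # one stable identifier reused everywhere

organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "@id": ORG_ID,
    "name": "ExampleDevTool",
    "url": SITE,
    "sameAs": [
        "https://github.com/exampledevtool",
        "https://www.linkedin.com/company/exampledevtool",
    ],
}

# Product pages reference the same node instead of redefining the company.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "ExampleDevTool API",
    "brand": {"@id": ORG_ID},
}

print(json.dumps([organization, product], indent=2))
```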

4. Original data and statistics

Original research, verifiable statistics, and data-driven insights get cited more frequently than unsupported opinions. AI models prioritize factual, well-sourced information. We push clients to publish at least one data-driven piece per quarter specifically for this reason.

5. One rule across all content types

Google's May 2026 guide flagged this as the single biggest visibility lever for generative AI features: content needs a unique point of view. Content that restates what ten other pages already say does not get cited. For devtools companies, this means writing from real implementation experience, actual client data, and opinions only your team can have. Format is secondary. Perspective is what gets you cited.

6. Quarterly content refresh

Pages that go stale lose citation frequency. We build a 90-day refresh cycle into every engagement. Updated publish dates, new data, expanded sections. This signals to both Google and LLMs that the content is current and maintained.
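A 90-day cycle is easy to turn into a refresh queue. This sketch assumes a hypothetical map of page URL to the date of its last substantive update (the dates are made up) and lists the pages that are due, oldest first.

```python
from datetime import date, timedelta
from typing import Dict, List

REFRESH_INTERVAL = timedelta(days=90)


def due_for_refresh(last_updated: Dict[str, date], today: date) -> List[str]:
    """Pages last updated 90 or more days ago, oldest first."""
    due = [url for url, updated in last_updated.items()
           if today - updated >= REFRESH_INTERVAL]
    return sorted(due, key=lambda url: last_updated[url])


# Hypothetical pages and update dates.
pages = {
    "/blog/reduce-alert-noise-kubernetes": date(2026, 1, 10),
    "/blog/otel-getting-started": date(2026, 5, 1),
    "/compare/product-alternatives": date(2026, 3, 3),
}
print(due_for_refresh(pages, today=date(2026, 6, 1)))
```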

Wrapping up

We have used this SEO framework with devtools companies including Cloudinary, Flutterwave, Roadmap, Doppler, and Bitcloud.

Cloudinary increased organic traffic by 88% in five months using a hub-and-spoke content strategy focused on developer search intent. Read the full case study.

Roadmap achieved similar results, growing organic traffic from 240,000 to 480,000 monthly visits over 24 months through a structured hub-and-spoke content program.

Traffic growth is only one part of the equation. The real goal is understanding how organic discovery contributes to developer adoption, product usage, and revenue. The developer marketing metrics guide explains how to measure performance across the entire developer journey, from search visibility to activation and advocacy.

For a broader look at how SEO fits into developer acquisition, the complete developer marketing guide covers strategy, channels, GTM, and measurement end to end.

If you're a devtool company looking to grow organic traffic and developer adoption, we can put together a custom SEO strategy tailored to your product, audience, and growth goals. Speak with us.